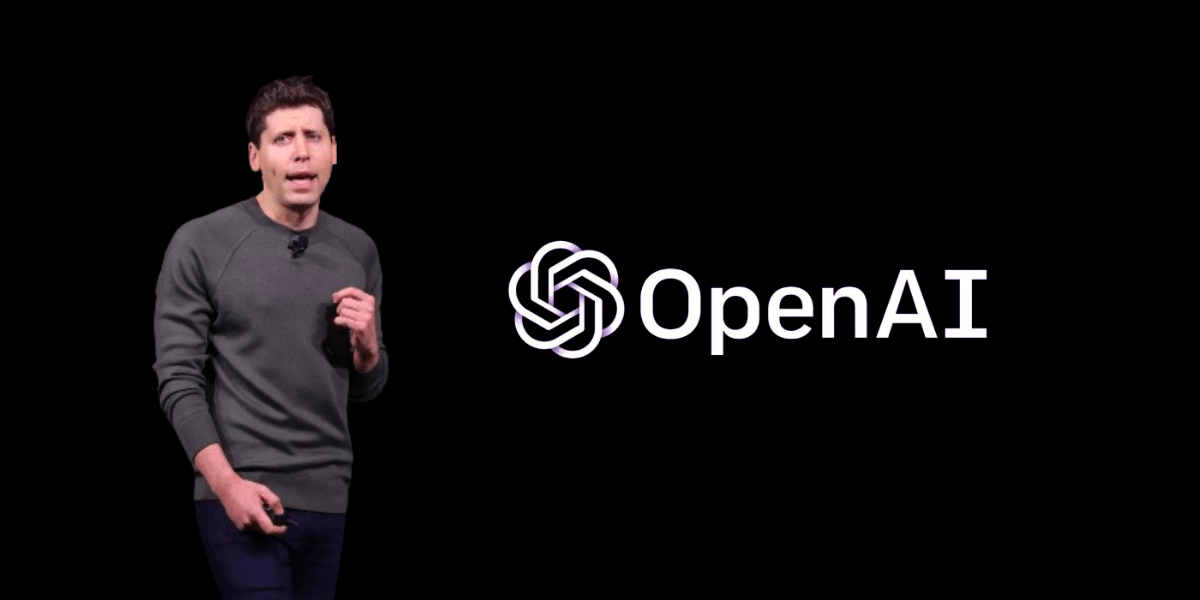

OpenAI CEO Sam Altman has issued a public apology to the community of Tumbler Ridge, British Columbia, after the company failed to alert law enforcement about the ChatGPT account of an 18-year-old who carried out a mass shooting that killed eight people in February.

The letter, dated Thursday and shared by British Columbia Premier David Eby on social media, acknowledged that OpenAI had banned the account of the shooter, Van Rootselaar, in June 2025, eight months before the attack, for violating usage policies related to potential violent misuse. Although automated systems and human reviewers had flagged the account internally, the company determined at the time that the activity did not meet its threshold for an imminent and credible risk of serious physical harm, and so it did not refer the account to the Royal Canadian Mounted Police.

Altman wrote that he had spoken with Tumbler Ridge Mayor Darryl Krakowka and Premier Eby, who conveyed the community’s anger, sadness, and concern. He stated that while words could never be enough, an apology was necessary to recognize the irreversible loss suffered by families in the small northeastern British Columbia town.

The shooting on February 10 began at a residence where Van Rootselaar killed her 39-year-old mother, Jennifer Jacobs, and 11-year-old stepbrother, Emmett Jacobs, before proceeding to Tumbler Ridge Secondary School. There, she fatally shot five children and an educator before turning the gun on herself. Twenty-five others were injured in the attack.

OpenAI confirmed in February that it had proactively reached out to the RCMP with information about the individual’s use of ChatGPT following the tragedy and said it would continue to support the investigation. The company maintains that its models are trained to discourage real-world harm and to refuse assistance when illicit intent is detected, with such cases escalated to human reviewers for threat assessment.

In his letter, Altman emphasized OpenAI’s ongoing focus on preventative efforts to ensure such a tragedy never recurs. He expressed his deepest condolences to the entire community, saying he could not imagine anything worse than losing a child and that his heart remained with the victims.

Premier Eby, while acknowledging the apology as necessary, called it “grossly insufficient” for the devastation inflicted on the families of Tumbler Ridge, underscoring the enduring grief and sense of abandonment felt by the community.

The apology comes amid heightened scrutiny of AI companies’ responsibilities in monitoring harmful use. Earlier this week, Florida Attorney General James Uthmeier announced a criminal investigation into OpenAI after determining that ChatGPT had provided “significant advice” to a Florida State University student accused in an April 2025 campus shooting that killed two people and wounded several others.

What did OpenAI know about the shooter’s ChatGPT use before the attack?

OpenAI identified Van Rootselaar’s account in June 2025 through abuse-detection tools that look for the furtherance of violent activities, and banned it for policy violations. It did not alert law enforcement, having determined that the account did not meet its threshold for an imminent risk of serious physical harm.

Why didn’t OpenAI report the account to police despite banning it?

The company stated it weighed referral to the RCMP but concluded the account activity, while concerning, did not rise to the level of an imminent and credible threat sufficient to trigger mandatory reporting under its internal risk assessment framework.