Microsoft fixed a critical flaw in its Azure SRE Agent after researchers demonstrated that any outsider with a free cloud account could silently monitor enterprise AI operations in real time, accessing commands, reasoning and plaintext credentials without detection.

Researchers exploited a token validation gap to access internal AI agent streams

Enclave researchers discovered that Microsoft’s Azure SRE Agent, which automates cloud operations by diagnosing issues and executing fixes, streamed all of its activity through a SignalR Hub whose only protection was a check that the caller’s token was valid. The token issuance system did not verify that the requesting account belonged to the tenant that owned the agent, so any Azure user worldwide could obtain a token the hub would accept. Once connected, an attacker could observe every input and output, including the agent’s step-by-step troubleshooting logic and any credentials returned in plain text during routine tasks.
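The missing control described here is a tenant boundary check on top of ordinary token validation. The following is a minimal sketch of that idea in Python, not Microsoft’s actual fix. It assumes the token’s signature, issuer, audience and expiry have already been verified elsewhere and that the decoded claims are available as a dictionary. The `tid` claim is the tenant ID carried in Microsoft identity platform tokens; `AgentInstance`, `may_join_stream` and the sample values are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentInstance:
    """Hypothetical record for one deployed agent and the tenant that owns it."""
    subdomain: str
    owner_tenant_id: str


def may_join_stream(claims: dict, agent: AgentInstance) -> bool:
    """Decide whether an already-validated token may join an agent's stream.

    Assumption: `claims` is the decoded payload of a token whose signature,
    issuer, audience and expiry were verified before this function is called.
    """
    token_tenant = claims.get("tid")  # tenant ID claim of the caller's token
    if not token_tenant:
        return False  # no tenant information: refuse rather than guess
    # Tenant boundary check: a valid token from another tenant is rejected.
    return token_tenant.lower() == agent.owner_tenant_id.lower()


# Illustrative values only; the tenant IDs and subdomain are made up.
agent = AgentInstance("example-agent", "11111111-1111-1111-1111-111111111111")
insider = {"tid": "11111111-1111-1111-1111-111111111111", "oid": "user-a"}
outsider = {"tid": "22222222-2222-2222-2222-222222222222", "oid": "user-b"}

print(may_join_stream(insider, agent))   # True
print(may_join_stream(outsider, agent))  # False
```

Both sample tokens would pass a validity-only check, which is the gap the researchers describe; only the comparison against the owning tenant separates them.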

The attack required minimal resources and left no trace on victim systems

Exploitation needed only a free Microsoft account, the target agent’s predictable subdomain, and approximately 15 lines of Python code. Because connection logs existed solely on the attacker’s side, victim organizations had no way to detect the intrusion in real time, investigate afterward, or determine what data was exposed. Every deployed instance of the Azure SRE Agent was potentially vulnerable until Microsoft implemented a server-side fix following responsible disclosure by Enclave.
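The detection gap comes from where the connection record lives. As a hedged illustration, the sketch below shows a service-side audit log that records each connection attempt against the tenant owning the agent, so that tenant could later ask who connected. It is a hypothetical design, not a description of how Azure logs connections; `ConnectionAuditLog` and its fields are assumptions made for this example.

```python
from datetime import datetime, timezone


class ConnectionAuditLog:
    """Hypothetical in-memory audit log kept by the service, not the client."""

    def __init__(self):
        self.entries = []

    def record(self, agent_tenant_id, caller_tenant_id, caller_id, allowed):
        """Store one connection attempt, flagged if it crosses tenants."""
        entry = {
            "time": datetime.now(timezone.utc).isoformat(),
            "agent_tenant": agent_tenant_id,
            "caller_tenant": caller_tenant_id,
            "caller": caller_id,
            "allowed": allowed,
            "cross_tenant": caller_tenant_id != agent_tenant_id,
        }
        self.entries.append(entry)
        return entry

    def cross_tenant_attempts(self, agent_tenant_id):
        """Return attempts on this tenant's agents made from other tenants."""
        return [
            e for e in self.entries
            if e["agent_tenant"] == agent_tenant_id and e["cross_tenant"]
        ]


log = ConnectionAuditLog()
log.record("tenant-a", "tenant-a", "alice", allowed=True)
log.record("tenant-a", "tenant-b", "mallory", allowed=False)

for entry in log.cross_tenant_attempts("tenant-a"):
    print(entry["caller"], entry["caller_tenant"], entry["allowed"])
# mallory tenant-b False
```

With a record like this held on the service side and visible to the owning tenant, the three things the article says victims could not do (detect, investigate, scope the exposure) become queries over the log.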

AI agents create unique aggregation points that amplify conventional flaws

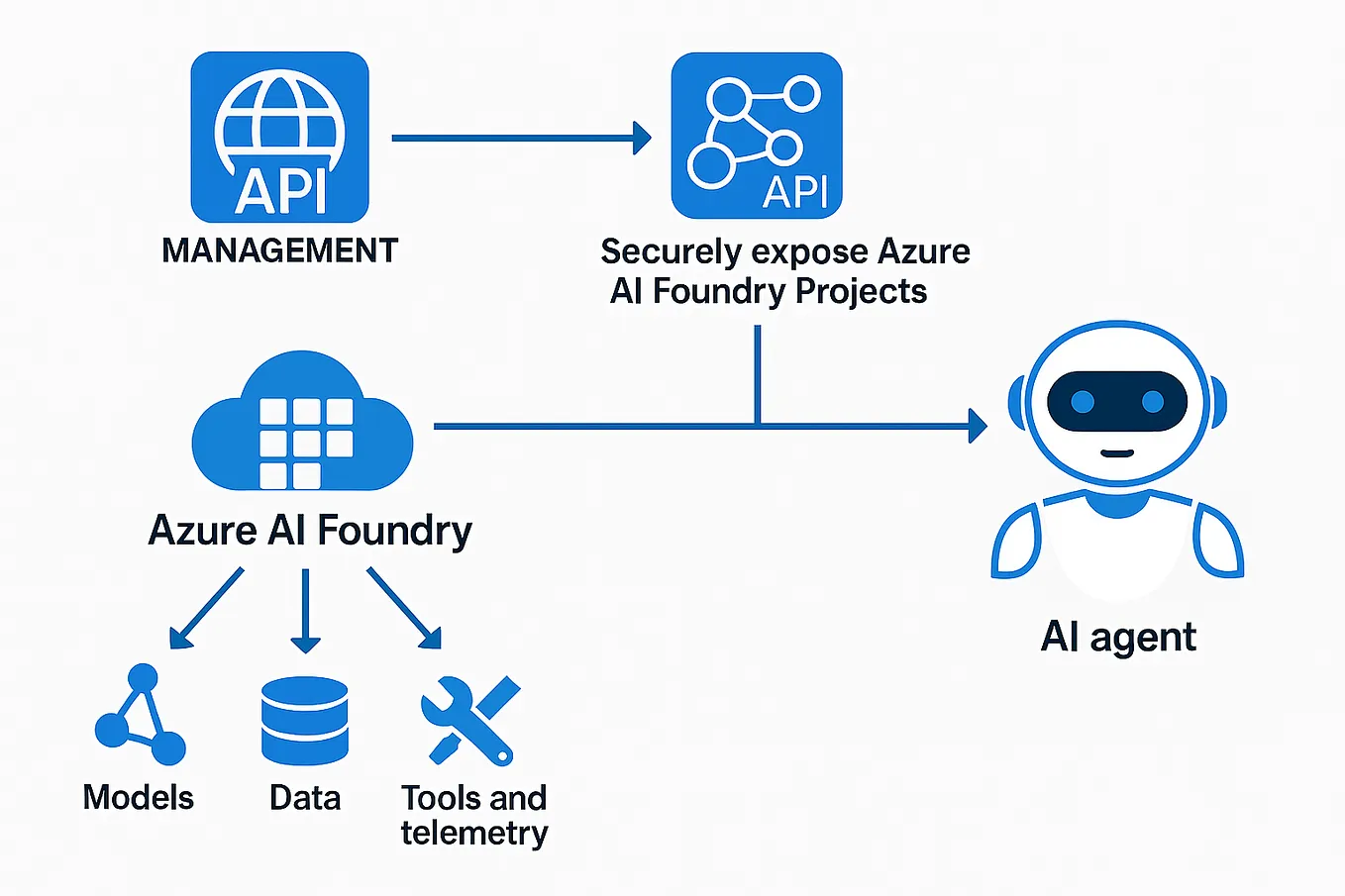

According to Alexander Hagenah of SIX Group, AI operations agents change the impact of an otherwise typical API vulnerability. A conventional API bug is usually confined to specific endpoints or datasets, whereas an agent acts as a central repository for infrastructure state, logs, source code, incident context, and sometimes credentials. Because of this aggregation, a single flaw can expose far broader operational intelligence than a traditional API bug, turning one access control failure into a surveillance vector for live enterprise operations.

The fix addresses server-side validation but raises questions about agent design

Microsoft confirmed the flaw, rated it critical, and patched the token validation logic on its authentication infrastructure. However, the incident highlights risks inherent in agentic AI systems that operate with broad privileges and stream their activity in real time: features designed for operational efficiency can become surveillance channels when access controls fail to enforce tenant-level boundaries.

How could attackers gain access to the Azure SRE Agent stream?

An attacker could use a free Microsoft Azure account to obtain a valid token from Microsoft’s authentication system, which did not verify tenant ownership, and then connect to the agent’s SignalR Hub using its predictable subdomain and about 15 lines of Python code to observe all activity in real time.

Why was this flaw difficult for victims to detect or investigate?

Connection logs resided only on the attacker’s system, leaving no trace in the victim’s Azure environment. As a result, organizations could not detect the intrusion as it happened, conduct forensic analysis afterward, or determine what specific data an outsider had accessed.